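`GROUPED_MAP` takes `Callable[[pandas.DataFrame], pandas.DataFrame]`, in other words a function which maps from a pandas DataFrame of the same shape as the input to the output DataFrame.

Since Spark 2.4.0 you are also provided with the `GROUPED_AGG` variant, which takes `Callable[[pandas.Series, ...], T]`, where `T` is a primitive scalar. It is selected with `functionType=PandasUDFType.GROUPED_AGG`, and the example imports NumPy for the computation.

For example, suppose you want to compute the average value of the pairwise minimum of `value1` and `value2` for each key. With `GROUPED_MAP` you have to define the output schema and pass it to the decorator together with `functionType=PandasUDFType.GROUPED_MAP`, after importing `pandas_udf` and `PandasUDFType` from `pyspark.sql.functions`. Inside the function the result is built as `pd.DataFrame(df.groupby(df.key).apply(…))`, and `result.reset_index(inplace=True, drop=False)` then turns the group key back into a regular column. The input data for this example looks like this: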

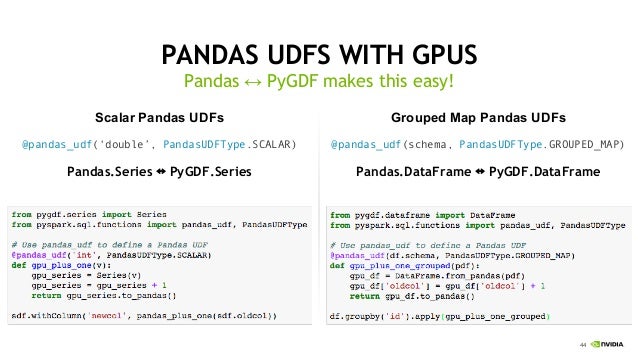
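To make the two function shapes concrete, here is a minimal sketch in plain pandas, runnable without a Spark session. The sample rows and the names `pairwise_min_mean`, `pairwise_min_mean_frame`, and `avg_min` are assumptions made for illustration; only the column names `key`, `value1`, and `value2` come from the text. The loop over `groupby` stands in for what Spark would do per group.

```python
import numpy as np
import pandas as pd

# Hypothetical sample data; only the column names follow the text.
pdf = pd.DataFrame({
    "key": ["a", "a", "b", "b"],
    "value1": [1, -1, 3, 10],
    "value2": [0, 42, -1, -2],
})


def pairwise_min_mean(value1: pd.Series, value2: pd.Series) -> float:
    """GROUPED_AGG shape: one Series per column in, one scalar out."""
    return float(np.minimum(value1, value2).mean())


def pairwise_min_mean_frame(group: pd.DataFrame) -> pd.DataFrame:
    """GROUPED_MAP shape: one group's DataFrame in, a DataFrame out."""
    avg_min = group[["value1", "value2"]].min(axis=1).mean()
    return pd.DataFrame({"key": [group["key"].iloc[0]], "avg_min": [avg_min]})


# Emulate locally what Spark would do once per group.
agg_result = {
    key: pairwise_min_mean(group["value1"], group["value2"])
    for key, group in pdf.groupby("key")
}
map_result = pd.concat(
    [pairwise_min_mean_frame(group) for _, group in pdf.groupby("key")],
    ignore_index=True,
)
```

In Spark 2.4 the first function would typically be wrapped with `pandas_udf(DoubleType(), functionType=PandasUDFType.GROUPED_AGG)` and used inside `groupBy("key").agg(...)`, while the second would need a declared output schema and `PandasUDFType.GROUPED_MAP`, and would be used with `groupBy("key").apply(...)`.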

PySpark added support for UDAFs using pandas. Under the hood it vectorizes the columns, batching the values from multiple rows together to optimize processing and compression. Some nice performance improvements have also been seen when using pandas UDFs and UDAFs over plain Python functions with RDDs.

A UDAF works on data that is grouped by a key. It needs to define how to merge multiple values of a group within a single partition, and then how to merge the results across partitions for each key. Without pandas UDFs there is no way to implement a UDAF in Python; they can only be implemented in Scala.

There is, however, a workaround in Python. Use `collect_set` to gather your grouped values, then use a regular UDF to do what you want with them. The only limitation is that `collect_set` only works on primitive values, so you have to encode them down to a string. The snippet for this imports `col`, `collect_list`, `concat_ws`, and `udf` from `pyspark.sql.functions` and starts by encoding the columns with `df.withColumn('data', concat_ws(',', col('B'), col('C')))`, after which the encoded values are gathered per group and passed to the UDF.
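The difference between a true UDAF and the collect-then-UDF workaround can be sketched in pure Python, with no Spark involved. Everything below is an illustration under assumptions: the rows, the two-partition split, and the helper names `update`, `merge`, `finish`, and `my_func` are hypothetical, and the aggregate (mean of the pairwise minimum of `B` and `C`) is chosen only to have something to compute.

```python
from collections import defaultdict

# Hypothetical rows (A, B, C), already split into two partitions.
partitions = [
    [("x", 1, 0), ("y", 3, -1)],
    [("x", -1, 42), ("y", 10, -2)],
]


def update(buffer, b, c):
    """Fold one row into a (sum, count) buffer within a partition."""
    total, count = buffer
    return total + min(b, c), count + 1


def merge(left, right):
    """Combine two partial buffers that belong to the same key."""
    return left[0] + right[0], left[1] + right[1]


def finish(buffer):
    """Turn the final (sum, count) buffer into the mean."""
    total, count = buffer
    return total / count


# UDAF style: partial aggregation per partition, then a merge per key.
partials = []
for rows in partitions:
    buffers = defaultdict(lambda: (0, 0))
    for a, b, c in rows:
        buffers[a] = update(buffers[a], b, c)
    partials.append(buffers)

merged = defaultdict(lambda: (0, 0))
for buffers in partials:
    for a, buffer in buffers.items():
        merged[a] = merge(merged[a], buffer)
udaf_result = {a: finish(buffer) for a, buffer in merged.items()}

# Workaround style: encode each row as a string, collect per key,
# then let one ordinary function process the whole list.
collected = defaultdict(list)
for rows in partitions:
    for a, b, c in rows:
        collected[a].append(f"{b},{c}")


def my_func(data_list):
    """Decode the "B,C" strings and compute the same mean."""
    pairs = [tuple(int(v) for v in item.split(",")) for item in data_list]
    return sum(min(b, c) for b, c in pairs) / len(pairs)


workaround_result = {a: my_func(items) for a, items in collected.items()}
```

Both paths give the same answer here, but the workaround gathers every value of a group in one place before the function runs, so it gets none of the benefit of partial aggregation inside each partition. The sketch collects into a list, which keeps duplicates; a set-based collection would drop repeated encoded rows and could change the mean.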
